We don't sell "an AI agent." We field crews.

A single agent in production is a fragile thing. A small crew — narrow agents, a human editor, a real eval harness — is a system you can ship and audit. Below: the six crews running inside our studio. Each runs against the same studio rule of thumb.

1 crew : 1 outcome

1 human : 1 final approval

1 eval : every commit, no exceptions.
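A minimal sketch of what an eval gate on each commit could look like. The `run_eval` helper, exact-match scoring, and the default threshold are assumptions for illustration, not a description of the studio's harness.

```python
def run_eval(agent, cases, threshold=1.0):
    """Score an agent on (input, expected) pairs.

    Returns (passed, pass_rate). Exact-match scoring and the threshold
    are illustrative assumptions.
    """
    if not cases:
        return False, 0.0  # an empty eval should never pass a commit
    hits = sum(1 for given, expected in cases if agent(given) == expected)
    rate = hits / len(cases)
    return rate >= threshold, rate
```

In a CI job, a failing result would typically stop the build, for example by exiting with a non-zero status.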

Six scouts sweep CN, SEA and SG weekly for grants, tenders, regulatory triggers, partnerships. A verifier checks every claim against its source documents.

- scouts.agent (×6) — grants · tenders · corporate · regulatory · events · academic

- editor.agent — dedupe, score fit/urgency/value, EN ⇌ 简中

- verifier.agent — multi-source corroboration + deadline plausibility

- BD owner (human) — promotes verified opportunities into CRM
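The editor step above (dedupe, then score fit, urgency and value) can be sketched roughly as follows. The field names, the 0-to-1 scales, and the unweighted mean are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Opportunity:
    title: str
    source_url: str
    fit: float      # 0..1, hypothetical scale
    urgency: float  # 0..1
    value: float    # 0..1


def dedupe(items):
    """Keep the first opportunity seen for each normalized title."""
    seen = set()
    unique = []
    for item in items:
        key = " ".join(item.title.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(item)
    return unique


def score(item):
    """Unweighted mean of fit, urgency and value (an assumed formula)."""
    return (item.fit + item.urgency + item.value) / 3


def rank(items):
    """Deduplicate, then sort highest score first."""
    return sorted(dedupe(items), key=score, reverse=True)
```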

A research desk, not a generator. Nine stages, ten specialists, source-tier registry. Every DOI cross-checked at Crossref, every URL fetched live. Bilingual.

- writers.agent (×7) — brainstorm · outline · research · draft · refine · humanize · translate

- fact-check.agent — claim-coverage gate, verbatim quote check

- reference-verifier.agent — DOI/Crossref, URL liveness, source-tier enforcement

- editor (human) — reads every piece end-to-end before publish
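A minimal sketch of the DOI side of reference verification. It assumes the public Crossref REST endpoint `https://api.crossref.org/works/{doi}` and a hypothetical `fetch_status` callable (URL in, HTTP status out), so the network call can be swapped or stubbed. The DOI pattern is a common heuristic, not a complete validator.

```python
import re
from urllib.parse import quote

# Heuristic pattern for modern DOIs; an assumption, not a full validator.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)


def crossref_url(doi):
    """Build the Crossref REST lookup URL for a DOI."""
    return "https://api.crossref.org/works/" + quote(doi, safe="/")


def verify_reference(doi, fetch_status):
    """Return 'verified', 'malformed' or 'not_found' for one DOI.

    fetch_status is a hypothetical callable: URL in, HTTP status code out.
    """
    if not DOI_PATTERN.match(doi):
        return "malformed"
    if fetch_status(crossref_url(doi)) == 200:
        return "verified"
    return "not_found"
```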

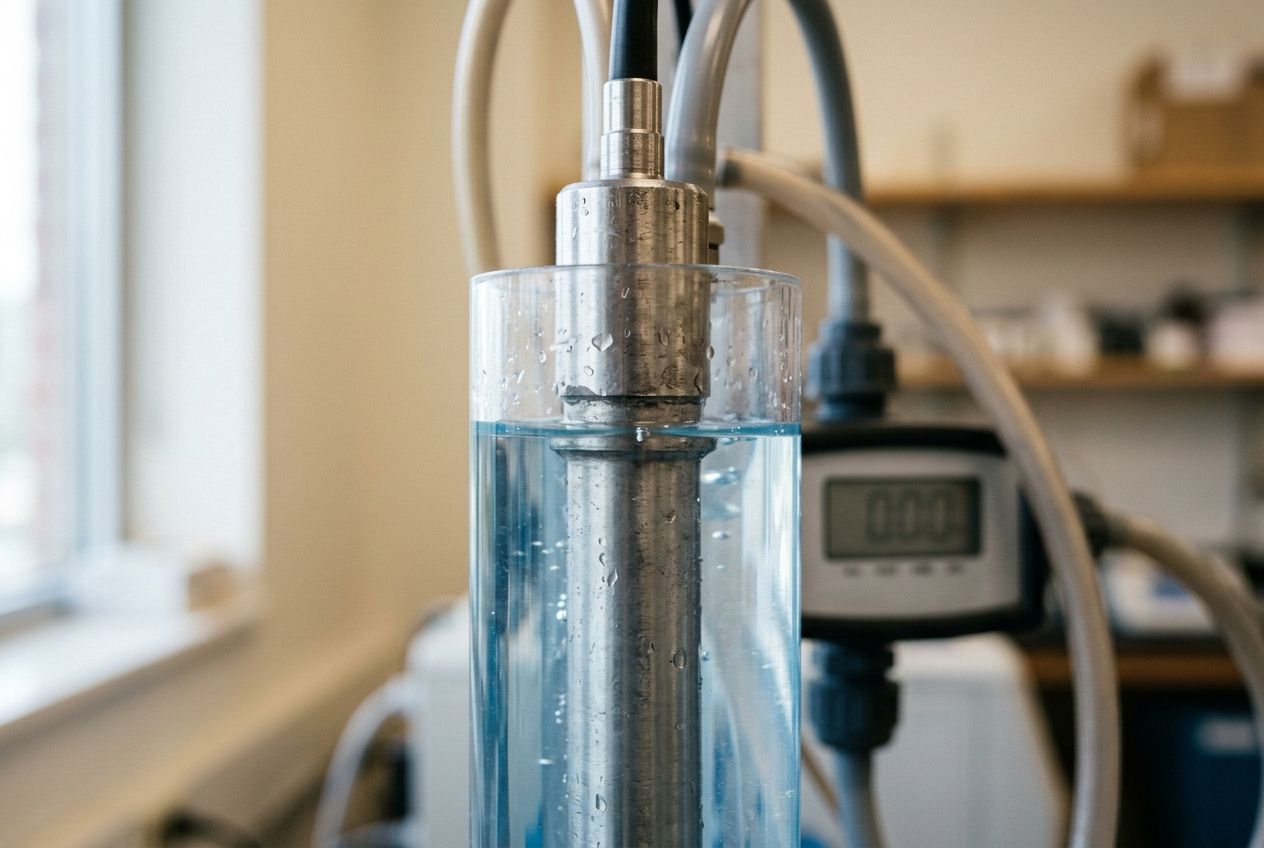

Sensor data → adaptive control loops → reports the regulator can read. Embedded with WaterDoctor, an NUS spin-off building AI-integrated biofilm reactors. The hard kind of agentic.

- ingestor.agent — in-tank probes (DO, pH, ammonia) + lab assays

- controller.agent — adaptive treatment loop with safety envelopes

- reporter.agent — auditable run summaries

- two senior wGrow engineers, on the ground
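One way to picture a safety envelope around an adaptive loop: the controller may propose any setpoint, but the applied value is rate-limited and clamped. The `Envelope` fields and any numbers used with them are hypothetical, not WaterDoctor's actual limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    low: float       # lowest setpoint allowed
    high: float      # highest setpoint allowed
    max_step: float  # largest change allowed per control cycle


def apply_envelope(current, proposed, env):
    """Limit the step size, then clamp the result to the allowed range."""
    step = max(-env.max_step, min(env.max_step, proposed - current))
    return max(env.low, min(env.high, current + step))
```

The controller stays free to adapt inside the envelope, while a bad proposal can move the process by at most one bounded step.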

Hero shots, lifestyle scenes, variant covers, social crops — brand-consistent and listing-ready. One brief in, a packaged set out, no manual rework downstream.

- brief.agent — product → shot list (hero, lifestyle, variants)

- renderer.agent — frames generated against the brand reference set

- grader.agent — brand-fit scoring, drift removed, set packaged

- art-director (human) — signs off the set before it ships

Scans a target market by category, ranks SKUs by selling range, surfaces qualified suppliers, and flags compliance friction before procurement opens a PO.

- scanner.agent — category sweep across marketplaces and TAM signals

- pricer.agent — selling-range bands, margin map, demand curve

- sourcer.agent — supplier shortlist with MOQ, lead times, certifications

- buyer (human) — signs every shortlist before a PO opens

Five specialists run the monthly close, draft the SFRS pack, and prepare GST F5 / Form C-S / AGM / ACRA filings for a Singapore accounting-firm caseload. A chartered accountant signs every figure.

- ledger.agent · close.agent — book-keep, reconcile to bank, accrue, depreciate

- reporting.agent — SFRS financial statements: P&L · balance sheet · cash flow

- tax.agent · governance.agent — GST F5 · Form C-S · ECI · AGM · ACRA AR · XBRL

- chartered accountant (human) — signs every figure, signs every filing

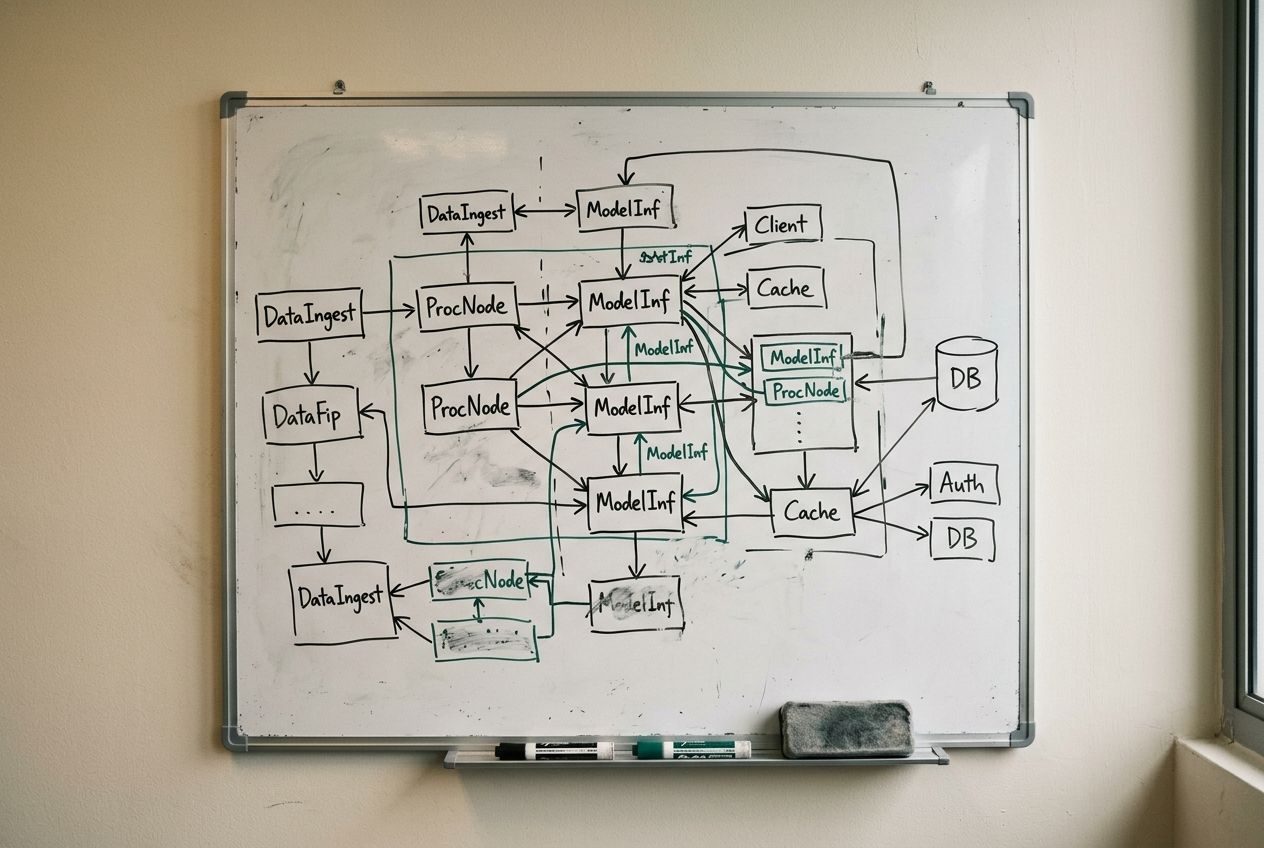

A crew is a memory architecture with agents on top.

Three things separate a crew you can run for a year from a crew you can run for a week: how memory is layered, how agents talk to each other, and what tends the state in the background. This is the shape of our default stack, not the recipe.

Four tiers, one budget per tier.

Most "agent memory" is a single context window stuffed until it overflows. We separate memory by lifetime and write policy, so an agent can hold the things that don't change without paying for them on every turn.

Each tier has its own retention rule, write authority, and size budget. Each agent in a crew reads from all four; only the curator (described below) writes across all of them.

Tier 01, charter. Pinned. Rarely written, never forgotten. The thing the agent is before it knows what task it's on: its remit, its no-go list, the voice it speaks in.

Tier 02, long-term. Client conventions, past decisions, prior outcomes, names that matter. Curated in by a separate agent, curated out when stale. Not a dumping ground: every entry earns its place.

Tier 03, scratchpad. Hot, cheap, disposable. Holds intermediate reasoning, partial drafts, and tool outputs the agent is still chewing on. Cleared when the task closes, unless the curator promotes a fragment up the stack.

Tier 04, shared knowledge base. Schemas, glossaries, run histories, eval results, ground-truth datasets. Read by every agent in the crew, so no two agents disagree on a fact. Written only by the curator and the human editor, the boundary that keeps drift out.
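The tier rules above (write authority plus a size budget per tier) can be sketched as a small data structure. Tier names follow the FAQ's labels. The role names, the entry-count budgets, and who may write the charter are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Tier:
    name: str
    writers: frozenset  # roles allowed to write to this tier
    budget: int         # max entries, a stand-in for a token budget
    entries: list = field(default_factory=list)

    def write(self, role, entry):
        """Append an entry if the role is authorized and budget remains."""
        if role not in self.writers:
            raise PermissionError(f"{role} may not write to {self.name}")
        if len(self.entries) >= self.budget:
            raise ValueError(f"{self.name} is over budget")
        self.entries.append(entry)


def default_memory():
    # Budgets and role names are illustrative assumptions.
    return {
        "charter": Tier("charter", frozenset({"curator"}), 20),
        "long_term": Tier("long_term", frozenset({"curator"}), 500),
        "scratchpad": Tier("scratchpad", frozenset({"agent", "curator"}), 200),
        "shared": Tier("shared", frozenset({"curator", "human_editor"}), 2000),
    }
```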

Machine-to-machine, not English-to-English.

Most agents talk to each other in English. Ours don't, when they don't have to. Reasoning steps stay in natural language. Telemetry, status, routing, and structured handoffs go over a typed channel.

Tokens are not free. Latency is not free. A crew that runs all day pays for every word it doesn't need to say.

"Hi controller — finishing the ingestion pass for batch 0421. The pH readings looked stable across the four sensors I checked, with the third one showing a slight drift around 0.2 above baseline that you might want to flag in the next run. Lab values from this morning are attached as a CSV..."

    {
      batch: "0421",
      status: "ok",
      drift: { sensor: 3, dpH: 0.2 },
      lab: "ref://lab/0421"
    }

Same handoff. Different cost surface. Real wGrow numbers go in the briefing, not the brochure.
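A sketch of how a typed handoff like this could be declared and round-tripped. The field names come from the example message; the `Handoff` and `Drift` class names and the choice of compact JSON as the wire format are assumptions.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Drift:
    sensor: int
    dpH: float


@dataclass(frozen=True)
class Handoff:
    batch: str
    status: str
    drift: Drift
    lab: str


def encode(msg):
    """Serialize compactly; asdict recurses into nested dataclasses."""
    return json.dumps(asdict(msg), separators=(",", ":"))


def decode(raw):
    """Rebuild the typed message; a missing field raises KeyError."""
    data = json.loads(raw)
    return Handoff(data["batch"], data["status"], Drift(**data["drift"]), data["lab"])
```

The receiving agent gets fields it can branch on directly, rather than prose it has to interpret.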

Memory rots if nobody tends it.

Every crew runs a curator agent. Its only job is to score what gets written, promote what proves useful, demote what goes stale, and prune the rest. The same loop your brain runs when you sleep — except this one has logs.

Without a curator, long-term memory becomes a haunted attic. With one, it stays small, recent, and relevant — which is the only state in which it earns its tokens.

- promote → long-term

- demote → cold cache

- prune → /dev/null
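A minimal sketch of the curator's decision for a single memory entry, using the three destinations above. The usage counter, the staleness window, and both thresholds are assumptions; a real curator would presumably score on richer signals.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    text: str
    hits: int            # times an agent actually used this entry
    days_since_use: int


def curate(entry, min_hits=3, stale_days=30):
    """Return the destination for one entry.

    Useful and recent is promoted, useful but stale is demoted,
    everything else is pruned. Thresholds are illustrative.
    """
    useful = entry.hits >= min_hits
    if useful and entry.days_since_use < stale_days:
        return "long-term"
    if useful:
        return "cold cache"
    return "/dev/null"
```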

Why we field crews, not single agents.

One agent doing five jobs is five prompts braided together. It will fail, and you won't know which of the five failed. A crew separates the jobs so each one has its own eval, its own budget, and its own failure mode.

The lead routes. The specialists do the work. The shared layer is the only thing they all touch. The human is the final gate. None of these are interchangeable.
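A rough sketch of the routing idea: the lead hands each task to exactly one specialist and tags the result, so a failure can be traced to a single agent. The task shape and the `make_lead` helper are hypothetical.

```python
def make_lead(specialists):
    """Build a router that hands each task to exactly one specialist.

    specialists maps a task kind to a callable; both are hypothetical.
    """
    def route(task):
        kind = task["kind"]
        if kind not in specialists:
            raise LookupError(f"no specialist for {kind!r}")
        # Tag the result so a failure can be traced to one agent.
        return {"handled_by": kind, "output": specialists[kind](task)}
    return route
```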

1 curator · 1 eval / commit

Anthropic Claude, OpenAI, Gemini — model-agnostic. Postgres + a vector store for memory. Eval harness on every commit.

Audited workflows, regulated data, long-running loops. Singapore-context compliance: PDPA, MAS, IMDA.

Single-agent demos. Chatbots without an outcome. "AI strategy" without an eval.

A one-week diagnostic. Crew shape, eval plan, weekly cadence on the table at the end of it.

What buyers actually ask.

Ten questions we hear in every first call — about crews, memory, models, compliance, and how engagements actually start. If yours isn't here, brief a crew.

01 What's the difference between a single AI agent and an "agent crew"?

A single agent doing five jobs is five prompts braided together — when it fails, you don't know which of the five failed. A crew separates the jobs: one narrow agent per task, one lead to route, one curator to tend memory, one human to approve. Each piece has its own eval, its own budget, and its own failure mode.

02 Why not just use ChatGPT or one large agent with tools?

Generalist agents demo well and ship badly. The moment you put one in production with real telemetry the failure modes interleave: bad retrieval poisons drafting, bad drafting fools the checker, and the trace is unreadable. Crews stay debuggable because each agent has one job and one eval. We use the big models — we just don't ask any single instance to do five things at once.

03 How does the four-tier memory architecture prevent context bloat?

Most "agent memory" is one context window stuffed until it overflows. We separate by lifetime: tier-01 (charter, pinned), tier-02 (long-term project facts, curator-written), tier-03 (per-task scratchpad, disposable), tier-04 (shared knowledge base, read by every agent in the crew). Each tier has its own retention rule, write policy, and size budget. Nothing leaks across boundaries without the curator's approval.

04 What is the "curator" agent and why does every crew need one?

Memory rots if nobody tends it. The curator is a dedicated agent whose only job is to score what gets written, promote what proves useful, demote what goes stale, and prune the rest. Without one, long-term memory becomes a haunted attic of contradictory facts. With one, it stays small, recent, and relevant — which is the only state in which it earns its tokens.

05 Why do your agents use structured messages instead of natural language?

Tokens aren't free. Latency isn't free. A 60-token prose handoff and a 22-token typed handoff can carry the same payload, but the typed one parses on the wire and the prose one has to be re-read. We keep reasoning steps in natural language (where they belong) and push telemetry, status, and routing onto a typed channel. A crew that runs all day pays for every word it doesn't need to say.

06 How do humans stay in the loop without becoming the bottleneck?

The human is the final gate (approve, edit, or reject), not a step on every turn. Agents do the research, drafting, checking, and routing autonomously. The human sees a packaged outcome with citations and a diff, then signs off (or sends it back). For the Article Crew that's an editor on every piece. For the BD Crew it's the BD owner promoting each verified opportunity into the CRM. For WaterDoctor it's senior wGrow engineers on the ground.
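The three outcomes of the final gate can be sketched as a small function. The `Decision` enum and the convention that a rejection returns `None` are assumptions for illustration.

```python
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    EDIT = "edit"
    REJECT = "reject"


def final_gate(draft, decision, edited=None):
    """Return the text to ship, or None if it goes back to the crew."""
    if decision is Decision.APPROVE:
        return draft
    if decision is Decision.EDIT:
        if edited is None:
            raise ValueError("an edit decision needs the edited text")
        return edited
    return None
```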

07 Are your crews compliant with Singapore's PDPA, MAS, and IMDA requirements?

That's our comfort zone. We work with audited workflows, regulated data, and long-running loops — designed with PDPA, MAS, and IMDA in mind from the first whiteboard session. Every crew run is logged, every memory write is attributable, every human approval is recorded. The audit trail isn't a feature we bolt on; it's the substrate the crew runs on.
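One common way to make a log attributable and tamper-evident is to hash-chain its records. The sketch below assumes that technique and a hypothetical record shape; the text above does not say how the audit trail is actually implemented.

```python
import hashlib
import json

FIELDS = ("actor", "action", "payload", "prev")


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log, actor, action, payload):
    """Append a record whose hash covers the previous record's hash."""
    prev = log[-1]["hash"] if log else ""
    body = {"actor": actor, "action": action, "payload": payload, "prev": prev}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Return True only if no record was altered, removed, or reordered."""
    prev = ""
    for rec in log:
        body = {key: rec[key] for key in FIELDS}
        if rec["prev"] != prev or rec["hash"] != _digest(body):
            return False
        prev = rec["hash"]
    return True
```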

08 Which LLM providers do you use? Are crews locked to one vendor?

Model-agnostic by default. We build on Anthropic Claude, OpenAI, and Gemini, and pick per agent based on what each role needs — long-context reasoning, structured output, cost. Memory lives in Postgres and a vector store we control, not inside the model provider. If a provider changes pricing, deprecates a model, or goes down, we swap. No single vendor owns your crew.
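A minimal sketch of the swap described above: each agent holds an ordered list of providers and falls through to the next on failure. The provider callables are hypothetical wrappers around whichever vendor SDK is in use; no real SDK calls are shown.

```python
def call_with_fallback(prompt, providers):
    """Try each provider in order; return (name, reply) from the first success.

    providers is a list of (name, callable) pairs. The callables are
    hypothetical wrappers, not real SDK functions.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider failure triggers the swap
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```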

09 How long does it take to ship a crew into production?

Most engagements start in 2–3 weeks. We open with a one-week diagnostic — crew shape, eval plan, weekly cadence on the table at the end of it. From there the first crew is usually running against real data inside a month, behind a human gate. We don't ship single-agent demos, and we don't ship without an eval harness on commit.

10 Do you build crews for clients, or also embed engineers with the team?

Both. For most engagements we design the crew, ship it, and hand over with an eval plan you can run yourself. For deeper work — like our WaterDoctor engagement — two senior wGrow engineers embed with the client team on the ground: sensor data, control loops, regulator-readable reports. The crew model is the same; the operating model is different.

Want a crew embedded with you?

We typically run a one-week diagnostic, then propose a crew shape, eval plan and weekly cadence. Most engagements start in 2–3 weeks.

Brief a crew →